Create Spark Scala applications using HDInsight Tools in Azure Toolkit for IntelliJ

This article provides step-by-step guidance on developing Spark applications written in Scala and submitting them to an HDInsight Spark cluster using HDInsight Tools in Azure Toolkit for IntelliJ.


You can use the tools to develop and submit a Scala Spark application on an HDInsight Spark cluster, to access your Azure HDInsight Spark cluster resources, and to develop and run a Scala Spark application locally. You can also follow a video here to get you started.

Important: This tool can be used to create and submit applications only for an HDInsight Spark cluster on Linux.

Prerequisites

Install HDInsight Tools in Azure Toolkit for IntelliJ

HDInsight tools for IntelliJ are available as part of the Azure Toolkit for IntelliJ. For instructions on how to install the Azure Toolkit, see Installing the Azure Toolkit for IntelliJ.

Run a Spark Scala application on an HDInsight Spark cluster

Launch IntelliJ IDEA and create a new project.

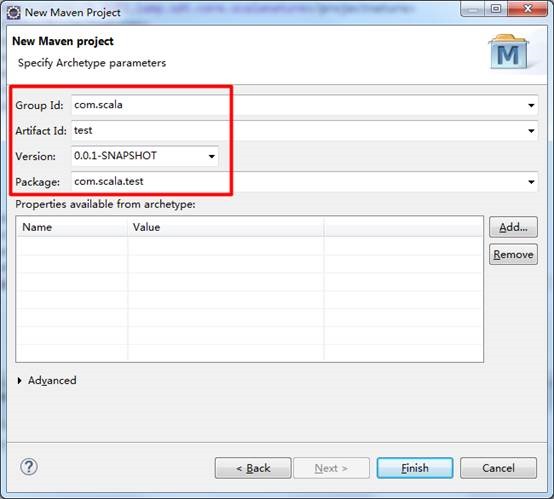

In the new project dialog box, from the left pane, select HDInsight. From the right pane, select Spark on HDInsight (Scala). Click Next.

In the next window, provide the project details. Provide a project name and project location. For Project SDK, make sure you provide a Java version greater than 7. For Scala SDK, click Create, click Download, and then select the version of Scala to use. Make sure you do not use version 2. This sample uses version 2.

For Spark SDK, download and use the SDK from here. You can also ignore this and use the Spark Maven repository instead; in that case, make sure you have the right Maven repository installed to develop your Spark applications. (For example, make sure you have the Spark Streaming part installed if you are using Spark Streaming. Also make sure you are using the repository marked as Scala 2. Scala 2.11.)

Click Finish.

The Spark project automatically creates an artifact for you. To see the artifact, from the File menu, click Project Structure. In the Project Structure dialog box, click Artifacts to see the default artifact that is created. You can also create your own artifact by clicking the + icon, highlighted in the image above.

In the Project Structure dialog box, click Project. If the Project SDK is set to 1. Project language level is set to 7 - Diamonds, ARM, multi-catch, etc.

Add your application source code. From the Project Explorer, right-click src, point to New, and then click Scala class.

In the Create New Scala Class dialog box, provide a name, for Kind select Object, and then click OK.

In the MyClusterApp.scala file, paste the following code. This code reads the data from HVAC.csv (available on HDInsight Spark clusters), retrieves the rows that have only one digit in the seventh column of the CSV, and writes the output to /HVACOut under the default storage container for the cluster.

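    import org.apache.spark.SparkConf
    import org.apache.spark.SparkContext

    object MyClusterApp {
      def main(arg: Array[String]): Unit = {
        val conf = new SparkConf().setAppName("MyClusterApp")
        val sc = new SparkContext(conf)

        // Read the sample HVAC data from the cluster's default storage container.
        val rdd = sc.textFile("wasbs:///HdiSamples/HdiSamples/SensorSampleData/hvac/HVAC.csv")

        // Keep the rows that have only one digit in the seventh column of the CSV.
        val rdd1 = rdd.filter(s => s.split(",")(6).length() == 1)

        // Write the result to /HVACOut in the default storage container.
        rdd1.saveAsTextFile("wasbs:///HVACOut")
      }
    }

Run the application on an HDInsight Spark cluster

From the Project Explorer, right-click the project name, and then select Submit Spark Application to HDInsight.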

You will be prompted to enter your Azure subscription credentials. In the Spark Submission dialog box, provide the following values.

For Spark clusters (Linux only), select the HDInsight Spark cluster on which you want to run your application. Select an artifact, either from the IntelliJ project or from the hard disk. Against the Main class name text box, click the ellipsis (...), select the main class in your application source code, and then click OK. Because the application code in this example does not require any command-line arguments or reference JARs or files, you can leave the remaining text boxes empty.

After providing all the inputs, the dialog box should resemble the following.

Click Submit. The Spark Submission tab at the bottom of the window should start displaying the progress.

You can also stop the application by clicking the red button in the "Spark Submission" window.

In the next section, you learn how to access the job output using the HDInsight Tools in Azure Toolkit for IntelliJ.

Access and manage HDInsight Spark clusters using the HDInsight Tools in Azure Toolkit for IntelliJ

You can perform a variety of operations using the HDInsight tools that are part of Azure Toolkit for IntelliJ.

Access the storage container for the cluster

From the View menu, point to Tool Windows, and then click HDInsight Explorer.

If prompted, enter the credentials to access your Azure subscription. Expand the HDInsight root node to see a list of HDInsight Spark clusters that are available. Expand the cluster name to see the storage account and the default storage container for the cluster. Click the storage container name associated with the cluster. In the right pane, you should see a folder called HVACOut. Double-click to open the folder and you will see part-* files.
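The part-* files hold the rows that the application kept. As a rough illustration of that rule, here is a minimal sketch in plain Scala with no Spark dependency. It assumes the application keeps lines whose seventh comma-separated field is exactly one character long; the object name, the helper name, and the sample rows are hypothetical and are not taken from HVAC.csv.

```scala
object SeventhColumnFilter {
  /** True when the seventh comma-separated field exists and is one character long. */
  def hasOneCharSeventhField(line: String): Boolean = {
    val fields = line.split(",")
    fields.length > 6 && fields(6).length == 1
  }

  def main(args: Array[String]): Unit = {
    // Hypothetical rows for illustration only.
    val rows = Seq(
      "a,b,c,d,e,f,7",  // seventh field has one character: kept
      "a,b,c,d,e,f,12", // seventh field has two characters: dropped
      "a,b,c"           // fewer than seven fields: dropped
    )
    rows.filter(hasOneCharSeventhField).foreach(println)
  }
}
```

Unlike a bare index into the split result, this sketch guards against short lines, which is a design choice of the sketch rather than something the article states.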

Open one of those files to see the output of the application.

Access the job view directly from the HDInsight tools

From the HDInsight Explorer, expand the Spark cluster name, and then click Jobs. In the right pane, the Spark Job View tab displays all the applications that were run on the cluster. Click the application name for which you want to see more details. The boxes for Error Message, Job Output, Livy Job Logs, and Spark Driver Logs are populated based on the application you select. You can also open the Spark History UI and the YARN UI (at the application level) by clicking the respective buttons at the top of the screen.

Access the Spark History Server

From the HDInsight Explorer, right-click your Spark cluster name and then select Open Spark History UI.

When prompted, enter the admin credentials for the cluster. You must have specified these while provisioning the cluster. In the Spark History Server dashboard, you can look for the application you just finished running by using the application name. In the code above, you set the application name using val conf = new SparkConf().setAppName("MyClusterApp"). Hence, your Spark application name was MyClusterApp.

Launch the Ambari portal

From the HDInsight Explorer, right-click your Spark cluster name and then select Open Cluster Management Portal (Ambari).

When prompted, enter the admin credentials for the cluster. You must have specified these while provisioning the cluster.

Manage Azure subscriptions

By default, the HDInsight tools list the Spark clusters from all your Azure subscriptions. If required, you can specify the subscriptions for which you want to access the cluster.

From the HDInsight Explorer, right-click the HDInsight root node, and then click Manage Subscriptions. From the dialog box, clear the check boxes against the subscriptions that you do not want to access and then click Close. You can also click Sign Out if you want to log off from your Azure subscription.

Run a Spark Scala application locally

You can use the HDInsight Tools in Azure Toolkit for IntelliJ to run Spark Scala applications locally on your workstation. Typically, such applications do not need access to cluster resources such as the storage container and can be run and tested locally.

Prerequisite

While running the local Spark Scala application on a Windows computer, you might get an exception as explained in SPARK-2. WinUtils.exe on Windows.

To work around this error, you must download the executable from here to a location like C:\WinUtils\bin. You must then add an environment variable HADOOP_HOME and set the value of the variable to C:\WinUtils.

Run a local Spark Scala application
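Before creating the project, it may help to confirm that the HADOOP_HOME prerequisite is in place. The following is a minimal sketch using only the Java standard library from Scala. It assumes the executable is named winutils.exe and sits in the bin folder under HADOOP_HOME, as in the example location above; the object and method names are hypothetical.

```scala
import java.io.File

object HadoopHomeCheck {
  /** Expected location of winutils.exe under a given HADOOP_HOME value. */
  def winUtilsPath(hadoopHome: String): File =
    new File(new File(hadoopHome, "bin"), "winutils.exe")

  /** Describes the prerequisite state, given a way to look up environment variables. */
  def describe(env: String => Option[String]): String =
    env("HADOOP_HOME") match {
      case None => "HADOOP_HOME is not set"
      case Some(home) =>
        val exe = winUtilsPath(home)
        if (exe.isFile) s"Found ${exe.getPath}"
        else s"HADOOP_HOME is set, but ${exe.getPath} does not exist"
    }

  def main(args: Array[String]): Unit =
    // Look up the real environment of the current process.
    println(describe(name => sys.env.get(name)))
}
```

Passing the environment lookup as a function keeps the check easy to try with different values without changing the machine's settings.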

Launch IntelliJ IDEA and create a new project.

In the new project dialog box, from the left pane, select HDInsight. From the right pane, select Spark on HDInsight Local Run Sample (Scala). Click Next.

In the next window, provide the project details. Provide a project name and project location. For Project SDK, make sure you provide a Java version greater than 7. For Scala SDK, click Create, click Download, and then select the version of Scala to use.

Make sure you do not use version 2. This sample uses version 2. For Spark SDK, download and use the SDK from here. You can also ignore this and use the Spark Maven repository instead; in that case, make sure you have the right Maven repository installed to develop your Spark applications. (For example, make sure you have the Spark Streaming part installed if you are using Spark Streaming. Also make sure you are using the repository marked as Scala 2. Scala 2.11.)

Click Finish.

The template adds sample code (LogQuery) under the src folder that you can run locally on your computer. Right-click the LogQuery application, and then click "Run 'LogQuery'". You will see the output in the Run tab at the bottom.

Convert existing IntelliJ IDEA applications to use the HDInsight Tools in Azure Toolkit for IntelliJ

You can also convert your existing Spark Scala applications created in IntelliJ IDEA to be compatible with the HDInsight Tools in Azure Toolkit for IntelliJ. This enables you to use the tool to submit the applications to an HDInsight Spark cluster. You can do so by performing the following steps.

For an existing Spark Scala application created using IntelliJ IDEA, open the associated .iml file. At the root level, you will see a module element like this:

    <module org.jetbrains.idea.maven.project.MavenProjectsManager.isMavenModule="true" type="JAVA_MODULE" version="4">

Edit the element to add UniqueKey="HDInsightTool" so that the module element looks like the following:

    <module org.jetbrains.idea.maven.project.MavenProjectsManager.isMavenModule="true" type="JAVA_MODULE" version="4" UniqueKey="HDInsightTool">
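The edit described above amounts to adding one attribute to the opening module tag. As a minimal sketch of that change, written as a plain string transformation in Scala, the helper below appends the attribute only when it is not already present. The object and method names are hypothetical, the sample tag is shortened for illustration, and a self-closing tag is not handled.

```scala
object ModuleKeyEdit {
  // Attribute named in the article; the helper around it is illustrative only.
  val UniqueKeyAttr: String = "UniqueKey=\"HDInsightTool\""

  /** Adds the attribute to an opening <module ...> tag unless one is already there. */
  def addUniqueKey(openingTag: String): String = {
    val close = openingTag.lastIndexOf('>')
    if (openingTag.contains("UniqueKey=") || close < 0) openingTag
    else openingTag.substring(0, close) + " " + UniqueKeyAttr + openingTag.substring(close)
  }

  def main(args: Array[String]): Unit = {
    // Shortened, hypothetical opening tag.
    val before = "<module type=\"JAVA_MODULE\" version=\"4\">"
    println(addUniqueKey(before))
  }
}
```

Applying the helper twice leaves the tag unchanged after the first call, so it is safe to run on a file that was already converted.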

IntelliJ IDEA - Wikipedia, the free encyclopedia

IntelliJ IDEA (pronounced /ɪnˈtɛlɪ j aɪˈdɪə/) is a Java integrated development environment (IDE) for developing computer software. It is developed by JetBrains (formerly known as IntelliJ), and is available as an Apache 2 Licensed community edition,[1] and in a proprietary commercial edition. Both can be used for commercial development.[2]

History

The first version of IntelliJ IDEA was released in January 2. Java IDEs with advanced code navigation and code refactoring capabilities integrated.[3][4]

In a 2. Infoworld report, IntelliJ received the highest test center score out of the four top Java programming tools: Eclipse, IntelliJ IDEA, NetBeans and JDeveloper.[5]

In December 2. Google announced version 1. Android Studio, an open source IDE for Android apps, based on the open source community edition of IntelliJ IDEA.[6] Other development environments based on IntelliJ's framework include AppCode, CLion, PhpStorm, PyCharm, RubyMine, WebStorm, and MPS.[7]

System requirements[8]

OS version. Windows: Microsoft Windows 1. Vista/2003/XP (incl. OS X: Mac OS X 10.5 or higher, up to 1. El Capitan). Linux: GNOME or KDE desktop.
RAM. 1 GB RAM minimum, 2 GB RAM recommended.
Disk space. 300 MB hard disk space + at least 1 GB for caches.
JDK version. JDK 1.
Screen resolution. ×768 minimum screen resolution.

Features

Version 1. Java 8, UI designer for Android development, Play 2. Scala.

Coding assistance

The IDE provides certain features[1.

Built in tools and integration

The IDE provides[1. SBT. It supports version control systems like Git, Mercurial, Perforce, and SVN. Databases like Microsoft SQL Server, Oracle, PostgreSQL, and MySQL can be accessed directly from the IDE.

Plugin ecosystem

IntelliJ supports plugins through which one can add additional functionality to the IDE. One can download and install plugins either from IntelliJ's plugin repository website or through the IDE's built-in plugin search and install feature. Currently IntelliJ IDEA Community edition has 1. Ultimate edition has 1.

Supported languages

The Community and Ultimate editions differ in their support for working with different programming languages as per the below table.[1.

Technologies and frameworks

Community Edition supports the following: [1. Ultimate Edition supports the following: [1.

Ultimate Edition also supports the application servers Geronimo, GlassFish, JBoss, Jetty, Tomcat, WebLogic, and WebSphere.[13] There are free plugins from Atlassian for IntelliJ to integrate with JIRA,[1. Bamboo, Crucible and FishEye.

Software versioning and revision control

The two editions also differ in their support[1.

Bibliography

Saunders, Stephen; Fields, Duane K.; Belayev, Eugene (March 1, 2. IntelliJ IDEA in Action (1st ed.), Manning, p. 4. ISBN 1- 9. 32. 39.
Davydov, S.; Efimov, A. (May 2005), IntelliJ IDEA. Professional'noe programmirovanie na Java (V podlinnike) (1st ed.), BHV, p. 8. ISBN 5- 9. 41. 57- 6.
